
Stable Diffusion / OpenAI

On Tuesday, OpenAI announced a sizable update to its large language model API offerings (including GPT-4 and gpt-3.5-turbo), including a new function-calling capability, significant cost reductions, and a 16,000-token context window option for the gpt-3.5-turbo model.

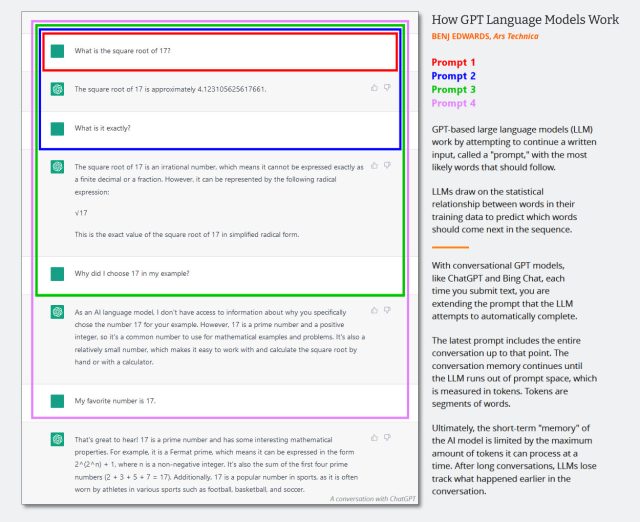

In large language models (LLMs), the “context window” is like a short-term memory that stores the contents of the prompt input or, in the case of a chatbot, the entire contents of the ongoing conversation. In language models, increasing context size has become a technological race, with Anthropic recently announcing a 75,000-token context window option for its Claude language model. In addition, OpenAI has developed a 32,000-token version of GPT-4, but it is not yet publicly available.

Along those lines, OpenAI just introduced a new 16,000-token context window version of gpt-3.5-turbo, called, unsurprisingly, “gpt-3.5-turbo-16k,” which allows a prompt to be up to 16,000 tokens in length. With four times the context length of the standard 4,000-token version, gpt-3.5-turbo-16k can process around 20 pages of text in a single request. This is a considerable boost for developers who need the model to process and generate responses for larger chunks of text.
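To make the "short-term memory" idea concrete, here is a minimal sketch of how an application might keep only the most recent chat messages that fit in a fixed token budget. The helper names (`estimate_tokens`, `trim_to_window`) are hypothetical, and the four-characters-per-token estimate is a rough assumption rather than the model's actual tokenizer.

```python
def estimate_tokens(text: str) -> int:
    """Very rough estimate: about 4 characters per token (an assumption)."""
    return max(1, len(text) // 4)


def trim_to_window(messages: list[dict], max_tokens: int) -> list[dict]:
    """Keep the most recent messages whose estimated total fits in max_tokens."""
    kept = []
    used = 0
    # Walk backward from the newest message and stop once the budget is spent.
    for message in reversed(messages):
        cost = estimate_tokens(message["content"])
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = [
    {"role": "user", "content": "a" * 40},       # about 10 estimated tokens
    {"role": "assistant", "content": "b" * 40},  # about 10 estimated tokens
    {"role": "user", "content": "c" * 40},       # about 10 estimated tokens
]
recent = trim_to_window(history, max_tokens=20)
print([m["content"][0] for m in recent])  # prints ['b', 'c']
```

With a larger budget, such as 16,000 tokens, the same history would be kept in full, which is the practical benefit of a bigger context window.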

As covered in detail in the announcement post, OpenAI listed at least four other major changes to its GPT APIs:

- Introduction of a function-calling feature in the Chat Completions API

- Improved and “more steerable” versions of GPT-4 and gpt-3.5-turbo

- A 75 percent price cut on the “ada” embeddings model

- A 25 percent price reduction on input tokens for gpt-3.5-turbo.

With function calling, developers can now more easily build chatbots capable of calling external tools, converting natural language into external API calls, or making database queries. For example, it can convert prompts such as “Email Anya to see if she wants to get coffee next Friday” into a function call like “send_email(to: string, body: string).” In particular, this feature will also allow for consistent JSON-formatted output, which API users previously had difficulty generating.
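As a rough illustration of the developer's side of function calling, the sketch below describes `send_email` in a JSON Schema style and then runs a function call locally. It makes no network request: `simulated_call` is a hand-written stand-in for a model response, and the payload shape (a function name plus JSON-encoded arguments) is an assumption based on OpenAI's announcement. The `send_email` body and the `dispatch` helper are hypothetical.

```python
import json

# Function description in a JSON Schema style. The name and fields mirror
# the send_email(to, body) example; the descriptions are illustrative.
SEND_EMAIL_SPEC = {
    "name": "send_email",
    "description": "Send an email to a recipient.",
    "parameters": {
        "type": "object",
        "properties": {
            "to": {"type": "string", "description": "Recipient name or address"},
            "body": {"type": "string", "description": "Text of the email"},
        },
        "required": ["to", "body"],
    },
}


def send_email(to: str, body: str) -> str:
    """Hypothetical stand-in; a real app would call a mail service here."""
    return f"Email to {to}: {body}"


# Local registry mapping function names to Python callables.
AVAILABLE_FUNCTIONS = {"send_email": send_email}


def dispatch(function_call: dict) -> str:
    """Run the requested function using its JSON-encoded arguments."""
    name = function_call["name"]
    if name not in AVAILABLE_FUNCTIONS:
        raise ValueError(f"Model requested unknown function: {name}")
    arguments = json.loads(function_call["arguments"])
    return AVAILABLE_FUNCTIONS[name](**arguments)


# Hand-written example payload, not actual model output.
simulated_call = {
    "name": "send_email",
    "arguments": json.dumps(
        {"to": "Anya", "body": "Do you want to get coffee next Friday?"}
    ),
}
result = dispatch(simulated_call)
print(result)
```

Checking the requested name against a local registry before calling anything is a deliberate choice here: the model only proposes a call, and the application decides whether to execute it.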

Regarding “steerability,” which is a fancy term for the process of getting the LLM to behave the way you want it to, OpenAI says its new “gpt-3.5-turbo-0613” model will include “more reliable steerability via the system message.” The system message in the API is a special directive prompt that tells the model how to behave, such as “You are Grimace. You only talk about milkshakes.”

In addition to the functional improvements, OpenAI is offering substantial cost reductions. Notably, the price of the popular gpt-3.5-turbo’s input tokens has been reduced by 25 percent. This means developers can now use this model for approximately $0.0015 per 1,000 input tokens and $0.002 per 1,000 output tokens, equating to roughly 700 pages per dollar. The gpt-3.5-turbo-16k model is priced at $0.003 per 1,000 input tokens and $0.004 per 1,000 output tokens.
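The per-token prices translate into request costs with simple arithmetic. The sketch below uses only the prices quoted above; the `estimate_cost` helper and the sample token counts are hypothetical, chosen for illustration.

```python
# Prices in US dollars per 1,000 tokens, as quoted in the article.
PRICES = {
    "gpt-3.5-turbo": {"input": 0.0015, "output": 0.002},
    "gpt-3.5-turbo-16k": {"input": 0.003, "output": 0.004},
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimated cost in dollars for a single request."""
    price = PRICES[model]
    input_cost = (input_tokens / 1000) * price["input"]
    output_cost = (output_tokens / 1000) * price["output"]
    return input_cost + output_cost


# Example request: 3,000 input tokens and 1,000 output tokens.
cost_standard = estimate_cost("gpt-3.5-turbo", 3000, 1000)
cost_16k = estimate_cost("gpt-3.5-turbo-16k", 3000, 1000)
print(f"gpt-3.5-turbo:     ${cost_standard:.4f}")
print(f"gpt-3.5-turbo-16k: ${cost_16k:.4f}")
```

For the same request, the 16k model costs twice as much as the standard model, since both its input and output prices are doubled.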

Benj Edwards / Ars Technica

Further, OpenAI is offering a massive 75 percent cost reduction for its “text-embedding-ada-002” embeddings model, which is more esoteric in use than its conversational brethren. An embeddings model is like a translator for computers, turning words and concepts into a numerical language that machines can understand, which is important for tasks like searching text and suggesting relevant content.
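To show why a "numerical language" helps with search and recommendations, here is a small sketch that compares vectors by cosine similarity, a common way to measure how close two embeddings are. The three-dimensional vectors are invented for illustration and do not come from any model; real embeddings have far more dimensions. The `most_similar` helper is hypothetical.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy vectors made up for this example, not real model output.
TOY_EMBEDDINGS = {
    "milkshake": [0.9, 0.1, 0.0],
    "smoothie": [0.8, 0.2, 0.1],
    "spreadsheet": [0.0, 0.2, 0.9],
}


def most_similar(query: str, embeddings: dict[str, list[float]]) -> str:
    """Return the other entry whose vector is closest to the query's vector."""
    query_vec = embeddings[query]
    others = {key: vec for key, vec in embeddings.items() if key != query}
    return max(others, key=lambda key: cosine_similarity(query_vec, others[key]))


best = most_similar("milkshake", TOY_EMBEDDINGS)
print(best)  # prints smoothie
```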

Since OpenAI keeps updating its models, the old ones won’t be around forever. Today, the company also announced it is beginning the deprecation process for some earlier versions of these models, including gpt-3.5-turbo-0301 and gpt-4-0314. The company says that developers can continue to use these models until September 13, after which the older models will no longer be accessible.

It’s worth noting that OpenAI’s GPT-4 API is still locked behind a waitlist and not yet widely available.
