
Getty Images | Benj Edwards

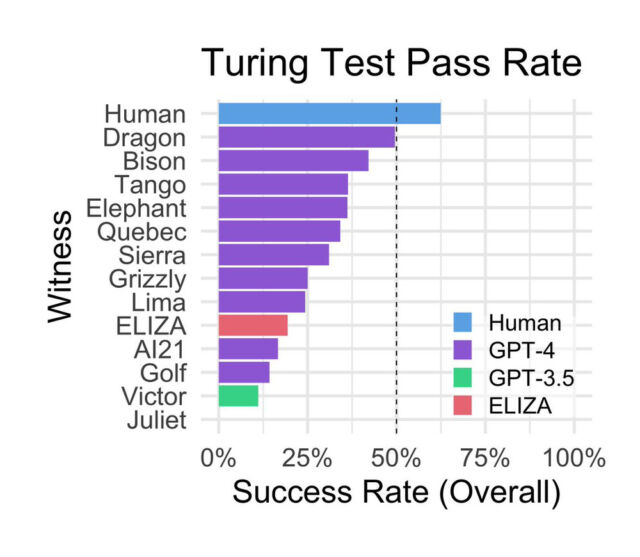

In a preprint research paper titled “Does GPT-4 Pass the Turing Test?”, two researchers from UC San Diego pitted OpenAI’s GPT-4 AI language model against human participants, GPT-3.5, and ELIZA to see which could trick participants into thinking it was human with the greatest success. But along the way, the study, which has not been peer-reviewed, found that human participants correctly identified other humans in only 63 percent of the interactions, and that a 1960s computer program surpassed the AI model that powers the free version of ChatGPT.

Even with limitations and caveats, which we’ll cover below, the paper presents a thought-provoking comparison between AI model approaches and raises further questions about using the Turing test to evaluate AI model performance.

British mathematician and computer scientist Alan Turing first conceived the Turing test as “The Imitation Game” in 1950. Since then, it has become a famous but controversial benchmark for determining a machine’s ability to imitate human conversation. In modern versions of the test, a human judge typically talks to either another human or a chatbot without knowing which is which. If the judge cannot reliably tell the chatbot from the human a certain percentage of the time, the chatbot is said to have passed the test. The threshold for passing the test is subjective, so there has never been a broad consensus on what would constitute a passing success rate.

In the recent study, listed on arXiv at the end of October, UC San Diego researchers Cameron Jones (a PhD student in Cognitive Science) and Benjamin Bergen (a professor in the university’s Department of Cognitive Science) set up a website called turingtest.live, where they hosted a two-player implementation of the Turing test over the Internet with the goal of seeing how well GPT-4, when prompted in different ways, could convince people it was human.

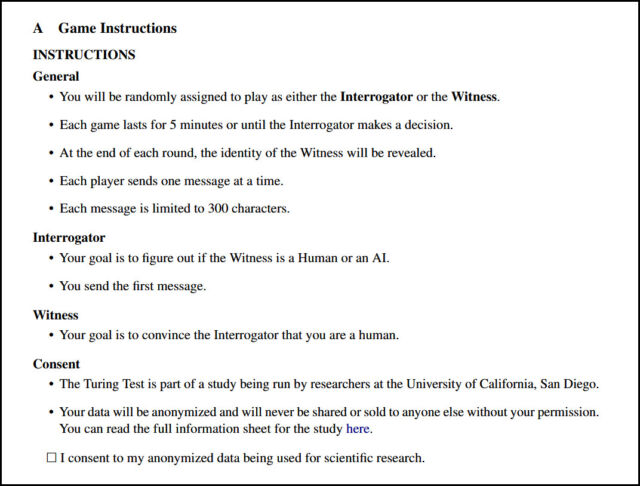

Through the site, human interrogators interacted with various “AI witnesses” representing either other humans or AI models that included the aforementioned GPT-4, GPT-3.5, and ELIZA, a rules-based conversational program from the 1960s. “The two participants in human matches were randomly assigned to the interrogator and witness roles,” write the researchers. “Witnesses were instructed to convince the interrogator that they were human. Players matched with AI models were always interrogators.”
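To give a rough sense of what “rules-based” means here, the sketch below shows a tiny pattern-matching responder in the spirit of ELIZA. The rules, the fallback line, and the `respond` function are hypothetical illustrations, not Weizenbaum’s original script and not the configuration used in the study.

```python
import re

# Hypothetical rules for illustration only; not Weizenbaum's original script.
# Each rule pairs a pattern with a reply template that reuses the captured text.
RULES = [
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "Why do you say you are {0}?"),
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "How long have you felt {0}?"),
    (re.compile(r"\bare you (.+)", re.IGNORECASE), "Why does it matter whether I am {0}?"),
]

# A noncommittal reply used when no rule matches.
FALLBACK = "Please go on."


def respond(message: str) -> str:
    """Return the reply of the first matching rule, or the fallback."""
    text = message.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            return template.format(match.group(1))
    return FALLBACK


print(respond("I am tired."))
print(respond("Are you a bot?"))
print(respond("Hello"))
```

A program like this never volunteers facts of its own. It only reflects the user’s words back or falls through to a generic reply.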

The experiment involved 652 participants who completed a total of 1,810 sessions, of which 1,405 games were analyzed after excluding certain scenarios like repeated AI games (leading to the expectation of AI model interactions when other humans weren’t online) or personal acquaintance between participants and witnesses, who were sometimes sitting in the same room.

Surprisingly, ELIZA, developed in the mid-1960s by computer scientist Joseph Weizenbaum at MIT, scored relatively well during the study, achieving a success rate of 27 percent. GPT-3.5, depending on the prompt, scored a 14 percent success rate, below ELIZA. GPT-4 achieved a success rate of 41 percent, second only to actual humans.
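As a rough illustration of how such a figure can be computed, the sketch below assumes that “success rate” means the share of games in which the interrogator judged the witness to be human. The `success_rate` helper and the list of verdicts are hypothetical and are not data from the study.

```python
def success_rate(verdicts):
    """Fraction of games in which the interrogator judged the witness to be human.

    `verdicts` holds one label per game, either "human" or "ai"
    (an assumed encoding for this sketch).
    """
    if not verdicts:
        raise ValueError("need at least one game")
    judged_human = sum(1 for verdict in verdicts if verdict == "human")
    return judged_human / len(verdicts)


# Hypothetical verdicts for one witness type: 27 of 100 games judged "human".
example_verdicts = ["human"] * 27 + ["ai"] * 73
print(f"{success_rate(example_verdicts):.0%}")
```

Under this definition, a witness judged human in 27 of 100 games would have a 27 percent success rate.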

GPT-3.5, the base model behind the free version of ChatGPT, has been conditioned by OpenAI specifically not to present itself as a human, which may partially account for its poor performance. In a post on X, Princeton computer science professor Arvind Narayanan wrote, “Important context about the ‘ChatGPT doesn’t pass the Turing test’ paper. As always, testing behavior doesn’t tell us about capability.” In a reply, he continued, “ChatGPT is fine-tuned to have a formal tone, not express opinions, etc, which makes it less humanlike. The authors tried to change this with the prompt, but it has limits. The best way to pretend to be a human chatting is to fine-tune on human chat logs.”

Further, the authors speculate about the reasons for ELIZA’s relative success in the study:

“First, ELIZA’s responses tend to be conservative. While this generally leads to the impression of an uncooperative interlocutor, it prevents the system from providing explicit cues such as incorrect information or obscure knowledge. Second, ELIZA does not exhibit the kind of cues that interrogators have come to associate with assistant LLMs, such as being helpful, friendly, and verbose. Finally, some interrogators reported thinking that ELIZA was “too bad” to be a current AI model, and therefore was more likely to be a human intentionally being uncooperative.”

During the sessions, the most common strategies used by interrogators included small talk and questioning about knowledge and current events. More successful strategies involved speaking in a non-English language, inquiring about time or current events, and directly accusing the witness of being an AI model.

The participants made their judgments based on the responses they received. Interestingly, the study found that participants based their decisions primarily on linguistic style and socio-emotional traits, rather than the perception of intelligence alone. Participants noted when responses were too formal or informal, or when responses lacked individuality or seemed generic. The study also showed that participants’ education and familiarity with large language models (LLMs) did not significantly predict their success in detecting AI.

Jones and Bergen, 2023

The study’s authors acknowledge the study’s limitations, including potential sample bias by recruiting from social media and the lack of incentives for participants, which may have led to some people not fulfilling the desired role. They also say their results (especially the performance of ELIZA) may support common criticisms of the Turing test as an inaccurate way to measure machine intelligence. “Nevertheless,” they write, “we argue that the test has ongoing relevance as a framework to measure fluent social interaction and deception, and for understanding human strategies to adapt to these devices.”
